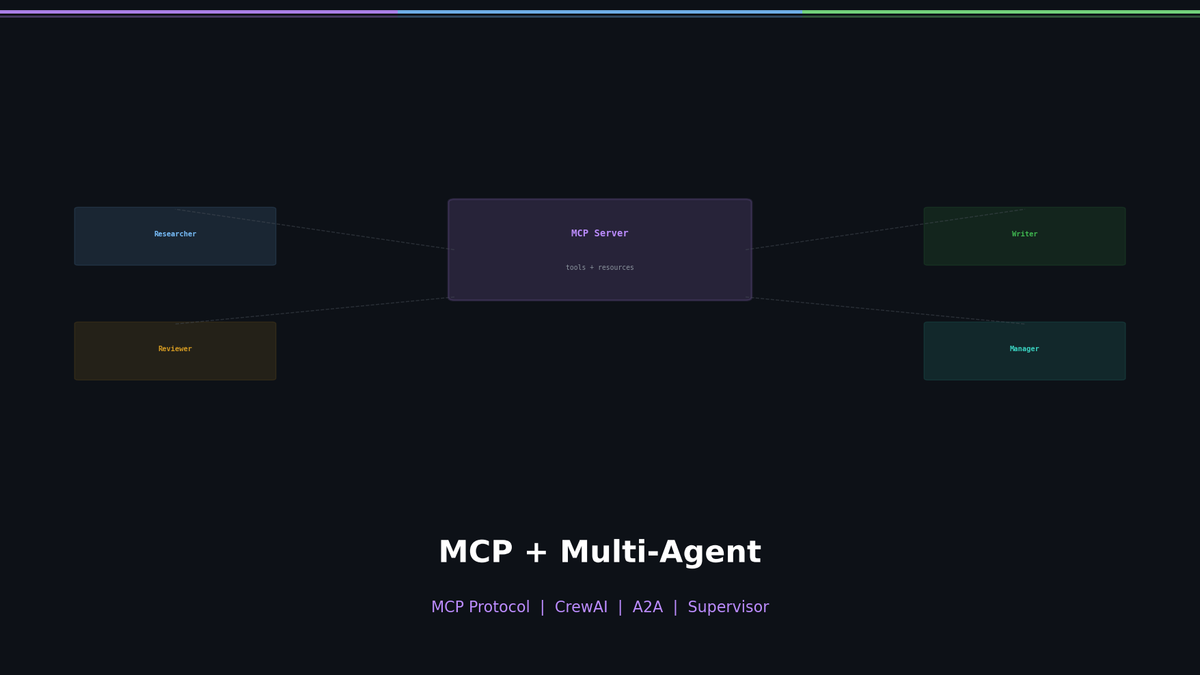

MCP + Multi-Agent — How Agents Share Tools and Collaborate

Standardize tool integration with MCP, build role-based multi-agent systems with CrewAI, and connect agents with the A2A protocol. Includes an architecture selection guide.

A single agent is powerful, but complex real-world tasks are hard to solve with one agent alone. What if you need to research, code, and review at the same time? The answer is to give multiple agents their own roles and have them collaborate.

In this post, we cover how to standardize tool integration with MCP (Model Context Protocol), build multi-agent teams with CrewAI, and enable agents to communicate with each other using A2A (Agent-to-Agent) patterns.

Series: Part 1: ReAct Pattern | Part 2: LangGraph + Reflection | Part 3 (this post) | Part 4: Production Deployment

The N×M Integration Problem
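
The heading refers to a counting argument commonly used to motivate MCP: if each of N agent applications needs its own adapter for each of M tools, the number of integrations grows as N×M, whereas a shared protocol needs roughly one client per application and one server per tool, or N+M. A minimal sketch of that arithmetic follows. The function names and the example sizes are illustrative assumptions, not taken from the post.

```python
def point_to_point_integrations(n_agents: int, m_tools: int) -> int:
    """Custom adapters needed when every agent wires up every tool itself."""
    return n_agents * m_tools


def shared_protocol_integrations(n_agents: int, m_tools: int) -> int:
    """Implementations needed when agents and tools meet at one protocol:
    one client per agent plus one server per tool."""
    return n_agents + m_tools


if __name__ == "__main__":
    # Hypothetical team sizes, chosen only to show how the gap widens.
    for n, m in [(2, 3), (5, 10), (10, 50)]:
        direct = point_to_point_integrations(n, m)
        shared = shared_protocol_integrations(n, m)
        print(f"agents={n} tools={m} direct={direct} shared={shared}")
```

The gap between the two counts widens as either side grows, which is why a common protocol pays off most once a system has many agents or many tools.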

Related Posts

LLM Inference Optimization Part 4 — Production Serving

Production deployment with vLLM and TGI. Continuous Batching, Speculative Decoding, memory budget design, and throughput benchmarks.

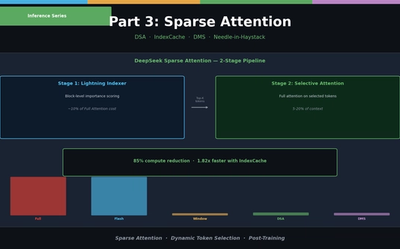

LLM Inference Optimization Part 3 — Sparse Attention in Practice

Sliding Window, Sink Attention, DeepSeek DSA, IndexCache, and Nvidia DMS. From dynamic token selection to Needle-in-a-Haystack evaluation.

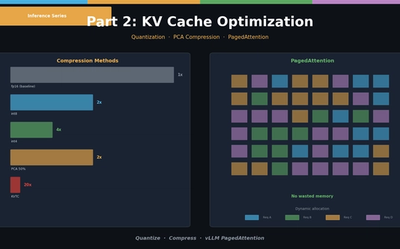

LLM Inference Optimization Part 2 — KV Cache Optimization

KV Cache quantization (int8/int4), PCA compression (KVTC), and PagedAttention (vLLM). Hands-on memory reduction code and scenario-based configuration guide.