LLM Inference Optimization Part 3 — Sparse Attention in Practice

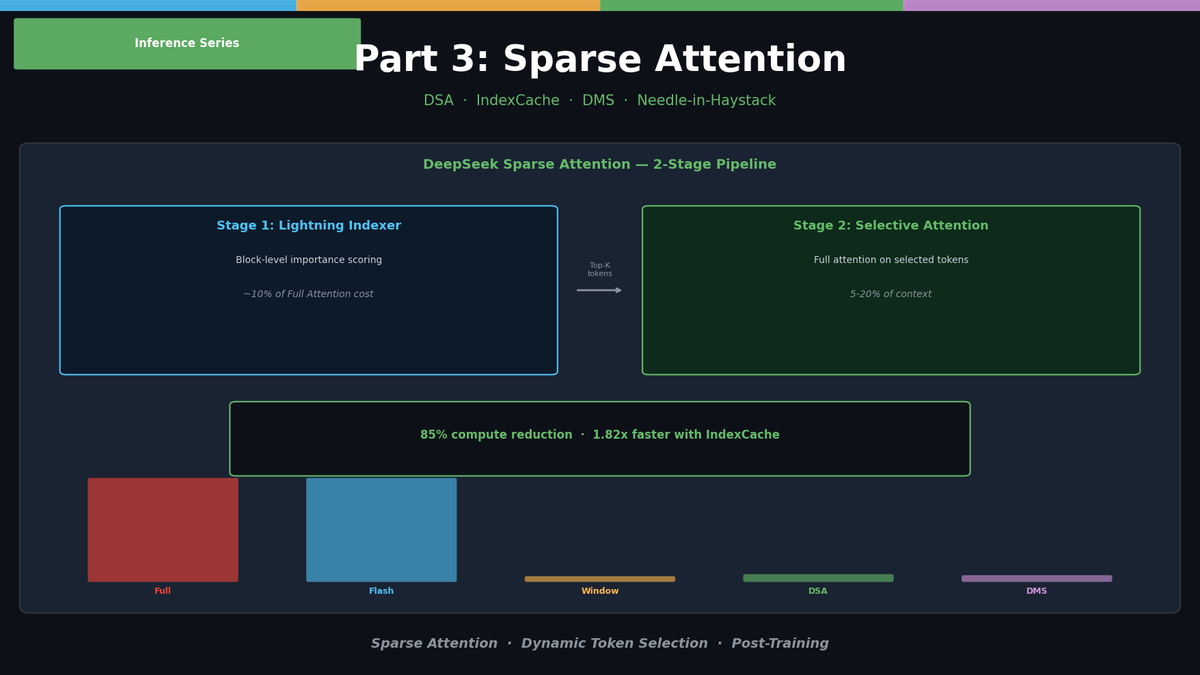

Sliding Window, Sink Attention, DeepSeek DSA, IndexCache, and Nvidia DMS. From dynamic token selection to Needle-in-a-Haystack evaluation.

LLM Inference Optimization Part 3 — Sparse Attention in Practice

In Part 2, we covered KV Cache quantization, compression, and PagedAttention. Those techniques focus on reducing stored data. Part 3 shifts direction and tackles reducing the computation itself with Sparse Attention.

The key question: "Do we really need every token?"

In most cases, the answer is no. In a 128K context, the tokens that the current token actually needs to attend to are only 5–20% of the total.

The Problem with Full Attention

Related Posts

Self-Evolving AI Agents — The New Paradigm of 2026

GenericAgent, Evolver, Open Agents — comparing 3 self-evolving agent frameworks that learn, adapt, and grow without human coding.

Build Your Own LLM Knowledge Base — A Karpathy-Style Knowledge System

Complete guide to building a permanent personal knowledge system with Obsidian + Claude Code. Wiki + Memory dual-axis architecture.

Why Karpathy's CLAUDE.md Got 48K Stars — And How to Write Your Own

One markdown file raised AI coding accuracy from 65% to 94%. Analyzing Karpathy's 4 rules and practical writing guide.