LLM Inference Optimization Part 1 — Attention Mechanism Deep Dive

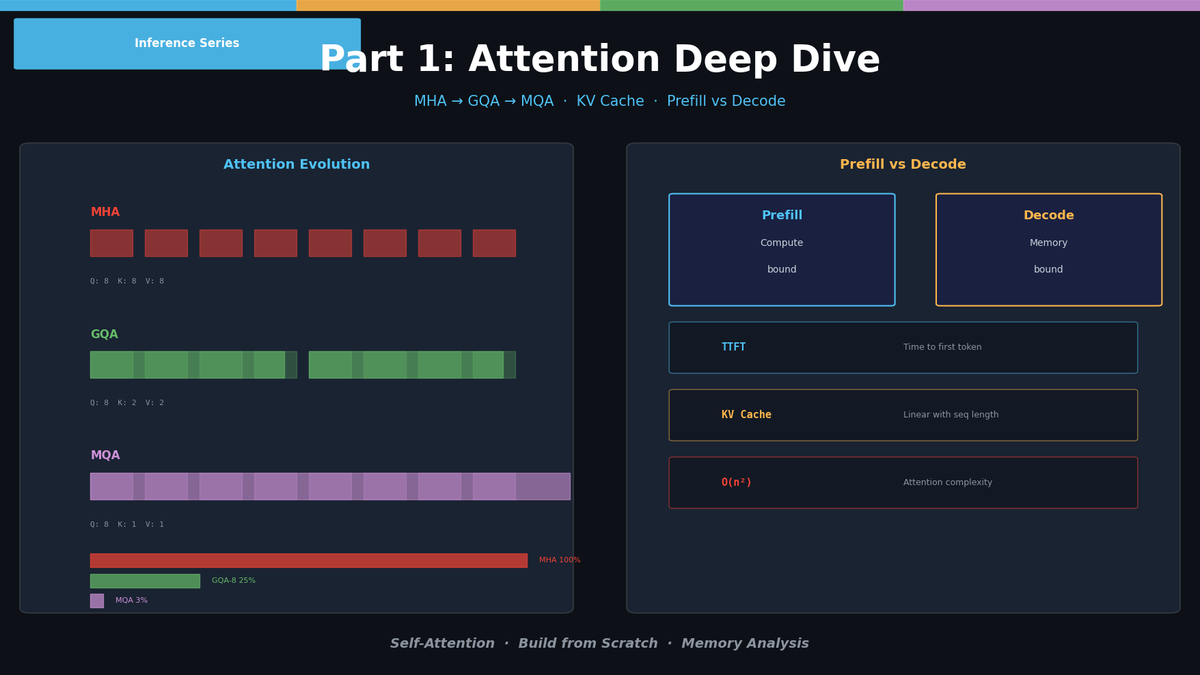

Build Self-Attention from scratch. Compare MHA → GQA → MQA evolution in code. KV Cache mechanics and Prefill vs Decode analysis.

LLM Inference Optimization Part 1 — Attention Mechanism Deep Dive

When you deploy an LLM to a production service, the first wall you hit is inference speed and memory. No matter how good the model is, it's useless if it's slow and expensive. In this series, we dissect the core bottlenecks of LLM inference one by one and cover practical optimization techniques with code.

In Part 1, we implement the Attention mechanism from scratch — the starting point of all optimizations — and compare the evolution from MHA to GQA to MQA directly in code.

Self-Attention — Implementing from Scratch

Basic Structure

Related Posts

Self-Evolving AI Agents — The New Paradigm of 2026

GenericAgent, Evolver, Open Agents — comparing 3 self-evolving agent frameworks that learn, adapt, and grow without human coding.

Build Your Own LLM Knowledge Base — A Karpathy-Style Knowledge System

Complete guide to building a permanent personal knowledge system with Obsidian + Claude Code. Wiki + Memory dual-axis architecture.

Why Karpathy's CLAUDE.md Got 48K Stars — And How to Write Your Own

One markdown file raised AI coding accuracy from 65% to 94%. Analyzing Karpathy's 4 rules and practical writing guide.