TransformerLens in Practice: Reading Model Circuits with Activation Patching

Using TransformerLens to directly manipulate model activations, we trace which layers and heads causally produce the answer. A hands-on guide to activation patching.

In the previous post, we treated Lens as a window into the model's intermediate thoughts.

But "reading" alone cannot answer the most important question:

Does the model actually *use* this information?

Just because a hidden state at some layer contains "Paris" does not mean that layer causally contributes to the final answer. Information can be present but unused. A layer might hold the right answer in its representation, yet the model might arrive at its output through entirely different pathways.

To determine what actually matters, we need more than visualization. We need causal intervention: directly manipulating the model's internals and observing how the output changes.
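To make the distinction between "present" and "used" concrete, here is a minimal sketch in plain Python. The `toy_model` function and its `side` and `main` activations are hypothetical stand-ins, not a real Transformer: both activations encode the input, but only `main` feeds the output. We run a clean input, run a corrupted input, then copy one clean activation at a time into the corrupted run and measure how much of the clean output is recovered.

```python
from typing import Dict, Optional, Tuple

Activations = Dict[str, float]


def toy_model(x: float, patch: Optional[Activations] = None) -> Tuple[float, Activations]:
    """Hypothetical two-pathway model (an illustration, not a Transformer).

    Both 'side' and 'main' encode the input, but the output reads only 'main'.
    Any activation named in `patch` is overwritten with the supplied value.
    """
    patch = patch or {}
    acts: Activations = {}
    acts["side"] = patch.get("side", 2.0 * x)  # information is present here...
    acts["main"] = patch.get("main", 3.0 * x)  # ...but only this one is used
    output = acts["main"] + 1.0                # 'side' is never read
    return output, acts


# Clean run: record the output and every intermediate activation.
clean_out, clean_acts = toy_model(1.0)
# Corrupted run: same model, different input.
corrupt_out, _ = toy_model(-1.0)


def recovery(name: str) -> float:
    """Fraction of the clean output restored by patching one clean activation."""
    patched_out, _ = toy_model(-1.0, patch={name: clean_acts[name]})
    return (patched_out - corrupt_out) / (clean_out - corrupt_out)


results = {name: recovery(name) for name in clean_acts}
print(results)  # {'side': 0.0, 'main': 1.0}
```

Reading `side` would suggest it "knows" the input, yet patching it changes nothing. Only the intervention on `main` moves the output, which is the kind of evidence activation patching is meant to provide.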

1. TransformerLens: A Surgical Toolkit for Interpretability

TransformerLens is a mechanistic interpretability library created by Neel Nanda. Its core capability is attaching hooks to every internal activation in a Transformer, allowing you to read, modify, and replace activations at will.

pip install transformer_lens

HookedTransformer: A Model Wired with Hooks

Related Posts

MIRAGE — Do Multimodal AIs Actually "See" Images?

GPT-5.1, Gemini 3 Pro, and Claude Opus 4.5 retain 70-80% of benchmark scores without any image input. A 3B text-only model outperforms all multimodal models and radiologists on chest X-ray benchmarks. Stanford MIRAGE paper review.

InternVL-U: Understanding + Generation + Editing in One 4B Model -- A New Standard for Unified Multimodal AI

Shanghai AI Lab's InternVL-U. A single 4B parameter model handles image understanding, generation, editing, and reasoning-based generation. Decoupled visual representations outperform 14B BAGEL on GenEval and DPG-Bench.

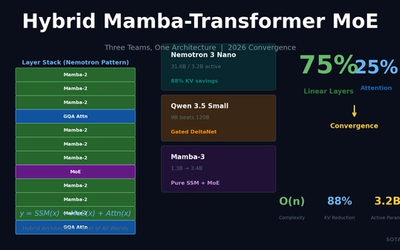

Hybrid Mamba-Transformer MoE: Three Teams, One Architecture -- The 2026 LLM Convergence

NVIDIA Nemotron 3 Nano, Qwen 3.5, and Mamba-3 independently converge on 75% linear layers + 25% attention + MoE. 88% KV-cache reduction, O(n) complexity for long-context processing.