Hybrid Mamba-Transformer MoE: Three Teams, One Architecture -- The 2026 LLM Convergence

NVIDIA Nemotron 3 Nano, Qwen 3.5, and Mamba-3 independently converge on roughly 75% linear layers + 25% attention + MoE, with up to 88% KV-cache reduction and O(n) scaling in the linear layers for long-context processing.

The Hybrid Mamba-Transformer-MoE Architecture: Three Teams, One Conclusion

In March 2026, something remarkable happened. Three independent teams -- NVIDIA, Alibaba (Qwen), and the Mamba research group -- arrived at the same architectural conclusion almost simultaneously.

"Neither pure Transformer nor pure SSM. Mix them at roughly 75% linear layers to 25% attention layers. Add MoE routing on top."

NVIDIA released Nemotron 3 Nano. Qwen shipped the 3.5 Small series. The Mamba team presented a theoretical framework (Mamba-3) at ICLR 2026. If one team had reached this conclusion, it could be coincidence. When three reach it at once, it signals a paradigm shift.

This post covers the background behind this convergence, the technical details of each architecture, and what it means for AI infrastructure going forward.

Background: The Cost Problem with Pure Transformers

For six years after GPT, the Transformer has been essentially the only LLM architecture. But as scale increases, two fundamental problems become harder to ignore.

1. Quadratic Complexity of Self-Attention

Self-attention -- the core of the Transformer -- requires O(n^2) computation with respect to sequence length n. Every token attends to every other token. The scaling looks like this:

| Sequence Length | Attention Compute (relative) |
|---|---|
| 1,024 | 1x |
| 8,192 | 64x |
| 32,768 | 1,024x |
| 131,072 | 16,384x |

8x longer means 64x more expensive. A 128K context requires over 16,000x more attention compute than 1K.
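The table values follow from squaring the length ratio. A minimal sketch, assuming attention cost is proportional to n^2 and ignoring constant factors (the function name is illustrative):

```python
def relative_attention_compute(seq_len: int, base_len: int = 1024) -> float:
    """Attention compute relative to base_len, assuming cost proportional to n^2."""
    return (seq_len / base_len) ** 2


for n in (1024, 8192, 32768, 131072):
    print(f"{n:>7,} tokens -> {relative_attention_compute(n):,.0f}x")
```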

2. KV-Cache Memory Explosion

During inference, Transformers must keep the Key-Value vectors for every previous token (prompt and generated) in memory (the KV-cache). This cache grows linearly with sequence length.

A 70B model processing a 128K context can consume tens of gigabytes of GPU memory on KV-cache alone. This limits batch sizes, caps concurrent users, and makes mobile or edge deployment essentially impossible.

These two problems motivated the State Space Model (SSM) line of research.

State Space Models Recap: The Road to Mamba

The core idea behind State Space Models is that "not every token needs to see every other token." Instead, information is compressed into a fixed-size state, which is updated sequentially as new tokens arrive.

An analogy: Transformers are like taking an exam with every textbook open on your desk (full reference). SSMs are like reading all the textbooks first, then answering from your summary notes (compressed state).

S4 (2022)

The Structured State Space Sequence Model discretized continuous-time state equations for sequence modeling. It achieved O(n) complexity and could handle long sequences, but it could not beat Transformers on language tasks. The state transition matrices were fixed (input-independent), making it weak at content-based reasoning.
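To make "fixed, input-independent transitions" concrete, here is a minimal sketch of a discretized linear SSM. It assumes a diagonal state matrix and zero-order-hold discretization, which is a simplification of S4's structured matrices; the function name and parameter values are illustrative only.

```python
import numpy as np


def lti_ssm_scan(x, a, b, c, dt):
    """Run a diagonal, input-independent SSM over a 1-D sequence x.

    a, b, c: arrays of shape (state_dim,); dt: scalar step size.
    """
    a_bar = np.exp(dt * a)             # zero-order-hold discretization of A
    b_bar = (a_bar - 1.0) / a * b      # matching discretization of B
    h = np.zeros_like(a)               # fixed-size state
    ys = []
    for x_t in x:
        h = a_bar * h + b_bar * x_t    # same transition at every step
        ys.append(float(c @ h))
    return np.array(ys), h


rng = np.random.default_rng(0)
a = -np.arange(1, 9, dtype=float)      # stable (negative) diagonal A
b = np.ones(8)
c = rng.standard_normal(8)
x_short = rng.standard_normal(16)
x_long = rng.standard_normal(4096)
y_short, h_short = lti_ssm_scan(x_short, a, b, c, dt=0.1)
y_long, h_long = lti_ssm_scan(x_long, a, b, c, dt=0.1)
print(h_short.shape, h_long.shape)     # state size does not depend on length
```

Because `a_bar` and `b_bar` never look at the input, the model cannot decide per token what to keep or discard, which is the weakness described above.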

Mamba (2023)

Gu and Dao's Mamba tackled S4's biggest weakness head-on. It made the SSM parameters input-dependent (selective state spaces): B, C, and the step size Delta are computed from the current token, so the effective state transition changes with content. The model could now choose what to remember and what to forget based on content.

Key properties:

- O(n) complexity: Compute scales linearly with sequence length

- Fixed-size state: No KV-cache. State size is independent of sequence length

- Hardware-aware implementation: Optimized data movement between GPU SRAM and HBM
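The selectivity idea can be sketched as a plain sequential loop. This is a toy reference under stated assumptions, not the hardware-aware kernel: the projection matrices `w_b`, `w_c`, and `w_dt` are hypothetical stand-ins for learned layers, and the discretization of B is the simplified form Delta * B.

```python
import numpy as np


def softplus(z):
    return np.logaddexp(0.0, z)


def selective_scan(x, a, w_b, w_c, w_dt):
    """x: (seq_len, d_model); a: (d_model, state_dim) with negative entries.

    w_b, w_c: (d_model, state_dim); w_dt: (d_model, d_model). All hypothetical.
    """
    seq_len, d_model = x.shape
    h = np.zeros_like(a)                         # fixed-size state, no KV-cache
    ys = np.empty_like(x)
    for t in range(seq_len):
        x_t = x[t]
        b_t = x_t @ w_b                          # input-dependent B, (state_dim,)
        c_t = x_t @ w_c                          # input-dependent C, (state_dim,)
        dt_t = softplus(x_t @ w_dt)              # input-dependent step size, (d_model,)
        a_bar = np.exp(dt_t[:, None] * a)        # per-token decay in (0, 1)
        b_bar = dt_t[:, None] * b_t[None, :]     # simplified discretization of B
        h = a_bar * h + b_bar * x_t[:, None]     # one O(1) update per token
        ys[t] = h @ c_t
    return ys, h


rng = np.random.default_rng(0)
d_model, state_dim = 4, 8
a = -np.exp(rng.standard_normal((d_model, state_dim)))
w_b = rng.standard_normal((d_model, state_dim)) * 0.1
w_c = rng.standard_normal((d_model, state_dim)) * 0.1
w_dt = rng.standard_normal((d_model, d_model)) * 0.1
ys, h = selective_scan(rng.standard_normal((64, d_model)), a, w_b, w_c, w_dt)
print(ys.shape, h.shape)
```

A large step size for a token pushes `a_bar` toward zero (forget the old state and write the new input), while a small one leaves the state nearly untouched.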

Mamba-2 (2024)

Mamba-2 reformulated the core computation in terms of semiseparable matrices and introduced the Structured State Space Duality (SSD) framework. SSD shows that a class of selective SSMs is mathematically equivalent to a masked form of linear attention. This theoretical link opened the door to hybrid architectures.

DeltaNet / Gated DeltaNet

DeltaNet is a linear attention variant that updates its state with the delta rule (an error-correcting learning rule). Gated DeltaNet adds a gating mechanism for finer-grained control over information flow. Like Mamba-2, it has O(n) complexity while offering expressiveness closer to full attention.

The Hybrid Insight: Why Mixing Works Better

Pure SSMs (Mamba, DeltaNet, etc.) have the advantage of O(n) complexity, but a quality gap versus pure Transformers persisted. Why?

The Fixed-State Information Bottleneck

An SSM's state is a fixed-dimensional vector. No matter how long the sequence, the state size does not change. This is like having a limited number of pages in your summary notes. Some information is inevitably lost.

Tasks where this becomes a problem:

- In-context learning: Tasks that require precise reference to examples given in the prompt

- Exact retrieval/copying: Tasks that need to reproduce a specific part of the input verbatim

- Complex dependency tracking: Tasks involving precise relationships between distant tokens

For these tasks, full attention -- where every token can directly reference every other token -- has a structural advantage.

The key hybrid insight: Most layers only need linear-complexity SSM/linear attention. Only a small number of layers need full attention.

Think of it this way. When reading a book, most of the time you follow the narrative sequentially (the SSM's job). But occasionally you need to flip back to an earlier page to check an exact detail (attention's job). You do not need to re-read from page one for every sentence.

Let us now look at the three implementations of this idea.

Case 1: NVIDIA Nemotron 3 Nano (30B-A3B)

NVIDIA's Nemotron 3 Nano is one of the most complete implementations of the hybrid architecture.

Architecture Breakdown

| Spec | Value |
|---|---|
| Total Parameters | 31.6B |
| Active Parameters | 3.2B (~10%) |
| Total Layers | 52 |
| Mamba-2 Layers | 23 (44%) |
| MoE FFN Layers | 23 (44%) |
| GQA Attention Layers | 6 (12%) |
| Context Window | 128K tokens |

Out of 52 layers, only 6 are attention layers. The remaining 46 are split evenly between Mamba-2 (linear complexity) and MoE FFN (conditional computation) layers.

Why This Ratio?

NVIDIA ran ablation experiments varying the attention ratio from 0% to 100%:

- 0% (pure Mamba): Significant degradation on in-context retrieval tasks

- 10-15% attention: Performance recovery to match pure Transformers on most benchmarks

- 25%+: Diminishing returns from additional attention layers

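6/52, or roughly 11.5% of all layers, is the sweet spot from these experiments. Counting only the sequence-mixing layers (23 Mamba-2 + 6 attention), attention makes up about 21%, which is closer to the 25% figure quoted for the other two designs.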
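The layer shares can be checked directly from the counts in the spec table. The grouping into "sequence-mixing" layers (Mamba-2 plus attention) is an interpretation added here for comparison, not a term from the report:

```python
layers = {"mamba2": 23, "moe_ffn": 23, "attention": 6}
total = sum(layers.values())

# Share of each layer type across the whole stack.
share_of_all = {name: count / total for name, count in layers.items()}

# Share of attention among layers that mix information across tokens.
mixers = layers["mamba2"] + layers["attention"]
attention_share_of_mixers = layers["attention"] / mixers

for name, share in share_of_all.items():
    print(f"{name:>9}: {share:.1%} of all layers")
print(f"attention: {attention_share_of_mixers:.1%} of sequence-mixing layers")
```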

MoE Configuration

The 23 MoE layers each contain 16 experts, with top-4 activated per token. This maintains the knowledge capacity of a 31.6B model while keeping actual computation at the 3.2B level.
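A minimal sketch of top-4-of-16 routing for a single token. The router, the toy linear "experts", and the choice to renormalize over the selected experts are all assumptions for illustration; the actual Nemotron routing details are not given here.

```python
import numpy as np


def top_k_route(router_logits, k=4):
    """Return (expert indices, normalized weights) for one token."""
    top_idx = np.argsort(router_logits)[-k:][::-1]    # k highest-scoring experts
    top_logits = router_logits[top_idx]
    weights = np.exp(top_logits - top_logits.max())   # softmax over the selected k
    return top_idx, weights / weights.sum()


def moe_forward(x, router_w, expert_ws, k=4):
    """x: (d,), router_w: (d, n_experts), expert_ws: (n_experts, d, d)."""
    idx, weights = top_k_route(x @ router_w, k)
    # Only k of the n_experts weight matrices are touched for this token.
    y = sum(w * (x @ expert_ws[i]) for i, w in zip(idx, weights))
    return y, idx, weights


rng = np.random.default_rng(0)
d, n_experts = 8, 16
x = rng.standard_normal(d)
router_w = rng.standard_normal((d, n_experts))
expert_ws = rng.standard_normal((n_experts, d, d))
y, used, gate = moe_forward(x, router_w, expert_ws)
print(sorted(used.tolist()), gate.sum())
```

All 16 expert matrices must be stored, but each token pays for only 4 of them, which is how total and active parameter counts diverge.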

Performance Summary

Nemotron 3 Nano significantly outperforms dense models of similar active parameter count and competes with models 10x larger. Highlights:

- Averages 5-8%p higher benchmark scores than Phi-4-mini (3.8B dense)

- Matches or exceeds Llama 3.1 8B performance with only 3.2B active parameters

- Runs real-time inference on NVIDIA Jetson edge devices

The KV-cache savings are dramatic. Only 6 attention layers require KV-cache, so KV-cache memory usage drops by roughly 88% compared to a Transformer of the same depth with attention in all 52 layers (1 - 6/52 is about 0.88).

Case 2: Qwen 3.5 Small Series -- The Power of Gated DeltaNet

Alibaba's Qwen team took a different linear layer -- DeltaNet-family linear attention -- to build their hybrid.

Architecture Core

The key design in Qwen 3.5 Small is a 3:1 ratio of Gated DeltaNet to softmax attention. For every 4 layers, 3 use Gated DeltaNet (linear complexity) and 1 uses full softmax attention. This puts the attention ratio at 25%, matching the theoretical optimum from Mamba-3 exactly.
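As a sketch, the 3:1 interleaving can be written as a layer schedule. Which slot in each group of four holds the attention layer is an assumption here (the last one), as are the layer names and the example depth:

```python
def build_layer_pattern(n_layers: int, period: int = 4) -> list[str]:
    """Every `period`-th layer is softmax attention; the rest are Gated DeltaNet."""
    return [
        "attention" if (i + 1) % period == 0 else "gated_deltanet"
        for i in range(n_layers)
    ]


pattern = build_layer_pattern(32)   # 32 layers is an arbitrary example depth
print(pattern[:8])
print(pattern.count("attention") / len(pattern))
```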

What is Gated DeltaNet?

Recall standard softmax attention:

Attention(Q, K, V) = softmax(Q * K^T / sqrt(d)) * V

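The softmax is what forces the O(n^2) cost: it is applied row-wise to the full n x n matrix Q * K^T, so that matrix has to be formed before multiplying by V. Linear attention removes the softmax and replaces it with a kernel function phi: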

LinearAttn(Q, K, V) = phi(Q) * (phi(K)^T * V)

The key trick is the order of operations. Computing phi(K)^T * V first yields a d x d matrix. Multiplying phi(Q) by that matrix costs O(n * d^2) -- linear in sequence length.
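The reordering can be verified numerically. The sketch below uses the non-causal, unnormalized form exactly as written above; the feature map elu(x) + 1 is one common choice and is an assumption here.

```python
import numpy as np


def phi(x):
    """Feature map elu(x) + 1, which keeps all features positive."""
    return np.where(x > 0, x + 1.0, np.exp(x))


rng = np.random.default_rng(0)
n, d = 256, 16
q, k, v = (rng.standard_normal((n, d)) for _ in range(3))

quadratic = (phi(q) @ phi(k).T) @ v    # forms an n x n matrix: O(n^2 * d)
kv_state = phi(k).T @ v                # forms a d x d matrix: O(n * d^2)
linear = phi(q) @ kv_state             # another O(n * d^2)

print(kv_state.shape, np.allclose(quadratic, linear))
```

Both orderings give the same result because matrix multiplication is associative; only the size of the intermediate matrix changes.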

DeltaNet applies the delta rule on top of this. When updating the state S at each step, it "erases the old relevant information" before "writing new information":

S_t = S_{t-1} - beta_t * (S_{t-1} * k_t) * k_t^T + beta_t * v_t * k_t^T

This is like an associative memory that overwrites old associations with new ones. Gated DeltaNet adds a gating mechanism (alpha) for finer control over what to retain and what to discard.
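The overwrite behavior is easy to see in a small example. This sketch implements the update above for one step; the scalar decay gate `alpha` is a simplified stand-in for Gated DeltaNet's gating, and the toy keys and values are made up for illustration.

```python
import numpy as np


def delta_rule_step(s, k, v, beta, alpha=1.0):
    """One state update; s has shape (d_v, d_k). alpha < 1 decays the old state."""
    s = alpha * s                      # gate: optionally forget part of the state
    old_v = s @ k                      # value currently associated with key k
    return s - beta * np.outer(old_v, k) + beta * np.outer(v, k)


d_k, d_v = 4, 3
k = np.array([1.0, 0.0, 0.0, 0.0])     # unit-norm key
v1 = np.array([1.0, 2.0, 3.0])
v2 = np.array([-1.0, 0.0, 5.0])

s = np.zeros((d_v, d_k))
s = delta_rule_step(s, k, v1, beta=1.0)   # write k -> v1
first_read = s @ k
s = delta_rule_step(s, k, v2, beta=1.0)   # overwrite: k -> v2
second_read = s @ k
print(first_read, second_read)
```

With beta = 1 and a unit-norm key, the second write fully replaces the first. Plain linear attention would instead accumulate v1 + v2 under the same key.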

9B Beats 120B

The most striking result from the Qwen 3.5 Small series is the 9B model's performance.

| Benchmark | Qwen 3.5 Small 9B | GPT-OSS-120B | Notes |
|---|---|---|---|
| MMLU-Pro | Higher | Lower | 9B exceeds 120B |
| HumanEval+ | Higher | Comparable | Coding ability on par |
| Throughput | ~10x | 1x | Active parameter difference |

A 9B hybrid model beating a 120B-parameter Transformer (GPT-OSS-120B is itself an MoE model, not a dense one) on benchmarks suggests that this architectural shift is not just an efficiency play: it can deliver genuine performance gains.

Case 3: Mamba-3 -- The Theoretical Contribution at ICLR 2026

The team led by Albert Gu and Tri Dao -- the original Mamba authors -- presented Mamba-3 at ICLR 2026. It is more theoretical framework than production model.

Key Contributions

1. Theoretical Derivation of the Optimal Ratio

The Mamba-3 paper provides a mathematical answer to "why is ~75% linear layers + ~25% attention layers optimal?"

The core argument:

- Linear layers (SSM/linear attention) are optimal for processing sequential "flow" at O(n) complexity

- Full attention is O(n^2) but irreplaceable for tasks requiring precise retrieval

- Analyzing the actual task distribution of language models shows that precise retrieval is needed in roughly 20-30% of computation

- Therefore, 25% attention suffices, and replacing the remaining 75% with linear layers dramatically reduces overall inference cost

2. Attention Sink Analysis

An interesting finding concerns the optimal placement of attention layers. Rather than distributing attention layers evenly throughout the network, concentrating them at the beginning and end is more effective. Early attention layers capture the global structure of the input, while late attention layers perform precise retrieval needed for output generation.

3. Unified Framework for SSM and Linear Attention

Extending the SSD (Structured State Space Duality) discovered in Mamba-2, Mamba-3 unifies the Mamba family and the DeltaNet family under a single mathematical framework. This proves that "which linear layer to use" is an implementation choice -- they are fundamentally the same computational class.

This explains why NVIDIA chose Mamba-2 and Qwen chose Gated DeltaNet, yet both achieve comparable results.

Performance Comparison: The Hybrid Advantage in Numbers

A side-by-side look at the key characteristics:

| Property | Nemotron 3 Nano | Qwen 3.5 Small 9B | Pure Transformer (comparable) |
|---|---|---|---|
| Total Parameters | 31.6B | ~9B | ~8B |
| Active Parameters | 3.2B | ~9B | ~8B |
| Linear Layer Ratio | 88% | 75% | 0% |
| KV-cache Size (128K) | ~12% of full | ~25% of full | 100% |
| Inference Speed (relative) | ~3-4x | ~2-3x | 1x |
| Memory Efficiency | Very high | High | Baseline |

KV-Cache Savings in Practice

This is where the biggest real-world deployment difference lies.

Consider the KV-cache size for a pure Transformer at 128K context. With 40 layers, hidden dim 4096, and GQA with 8 KV heads of dimension 64 (512 KV channels per layer):

KV-cache = 2 (K + V) * 40 (layers) * 128,000 (seq_len) * 512 (head_dim * n_kv_heads) * 2 (bytes, FP16) = ~10.5 GB

Nemotron 3 Nano needs KV-cache for only 6 layers:

KV-cache = 2 * 6 * 128,000 * 512 * 2 = ~1.6 GB

That is roughly 85% memory savings against this 40-layer baseline at the same context length (1 - 6/40 = 0.85). This difference directly impacts the number of concurrent users you can serve, the batch sizes you can run, and whether edge deployment is feasible.
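The same arithmetic as a small helper, useful for plugging in other configurations. The function and argument names are illustrative; `kv_width` stands for head_dim * n_kv_heads, and the numbers are the ones used in the formulas above.

```python
def kv_cache_bytes(n_attn_layers, seq_len, kv_width, bytes_per_value=2):
    """KV-cache size for one sequence: K and V, per attention layer, per token."""
    return 2 * n_attn_layers * seq_len * kv_width * bytes_per_value


full = kv_cache_bytes(n_attn_layers=40, seq_len=128_000, kv_width=512)
hybrid = kv_cache_bytes(n_attn_layers=6, seq_len=128_000, kv_width=512)
savings = 1 - hybrid / full

print(f"pure Transformer: {full / 1e9:.1f} GB")
print(f"6 attention layers: {hybrid / 1e9:.1f} GB")
print(f"savings: {savings:.0%}")
```

Since every other factor cancels, the savings depend only on the ratio of attention layer counts, and the result scales linearly with batch size.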

Practical Implications: What Changes

1. Inference Cost Reduction

The combination of O(n) linear layers, conditional MoE computation, and a handful of attention layers can generate responses of comparable quality with far fewer FLOPs. For API service providers, this translates directly to margin improvement.

2. Making Long Context Practical

Processing 128K+ context at low cost becomes viable. Full codebase analysis, long document summarization, and multi-turn conversations enter the economically practical zone.

3. On-Device Deployment

KV-cache savings make LLM deployment realistic on memory-constrained mobile and edge devices. NVIDIA demonstrated real-time inference on Jetson with Nemotron 3 Nano as a proof point.

4. Training Infrastructure Changes

Training hybrid models is more complex than training pure Transformers. Mamba/DeltaNet layers and attention layers require different parallelization strategies. New training frameworks and kernel optimizations are needed, and NVIDIA's hardware-software integration capabilities become even more important in this space.

Conclusion: What the Convergence Tells Us

Since "Attention is All You Need" in 2017, the Transformer architecture has been the undisputed standard for nearly a decade. But in March 2026, the simultaneous convergence of three independent teams signals the emergence of a new standard.

The key takeaways:

- The pure Transformer era is ending. O(n^2) attention is not needed in every layer.

- 75/25 is the new default ratio. 75% linear layers + 25% attention layers is the efficiency-performance sweet spot.

- MoE is becoming essential. MoE decouples knowledge capacity from compute cost, forming the third pillar of the hybrid architecture.

- SSM and Linear Attention are the same family. Whether you use Mamba-2 or Gated DeltaNet, they are mathematically the same computational class.

From "Attention is All You Need" to "Attention is Sometimes What You Need." That is the one-line summary of the 2026 architecture trend.

References

- NVIDIA Nemotron 3 Nano Technical Report -- Nemotron 3 Nano architecture details

- Qwen 3.5 Small Series Blog Post -- Qwen 3.5 Small series announcement

- Mamba-3: The Hybrid State Space Model Framework (ICLR 2026) -- Theoretical framework for hybrid SSMs

- Mamba: Linear-Time Sequence Modeling with Selective State Spaces -- Original Mamba paper

- Mamba-2: Structured State Space Duality -- Mamba-2 and SSD theory

- Gated Delta Networks: Improving Mamba2 with Delta Rule (Yang et al., 2025) -- Gated DeltaNet paper

- Attention Is All You Need (Vaswani et al., 2017) -- Original Transformer paper

Subscribe to Newsletter

Related Posts

LLM Inference Optimization Part 4 — Production Serving

Production deployment with vLLM and TGI. Continuous Batching, Speculative Decoding, memory budget design, and throughput benchmarks.

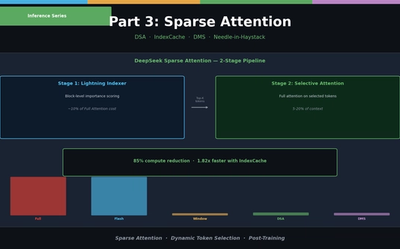

LLM Inference Optimization Part 3 — Sparse Attention in Practice

Sliding Window, Sink Attention, DeepSeek DSA, IndexCache, and Nvidia DMS. From dynamic token selection to Needle-in-a-Haystack evaluation.

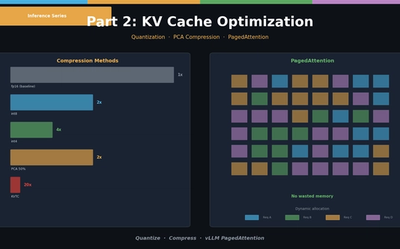

LLM Inference Optimization Part 2 — KV Cache Optimization

KV Cache quantization (int8/int4), PCA compression (KVTC), and PagedAttention (vLLM). Hands-on memory reduction code and scenario-based configuration guide.