InternVL-U: Understanding + Generation + Editing in One 4B Model -- A New Standard for Unified Multimodal AI

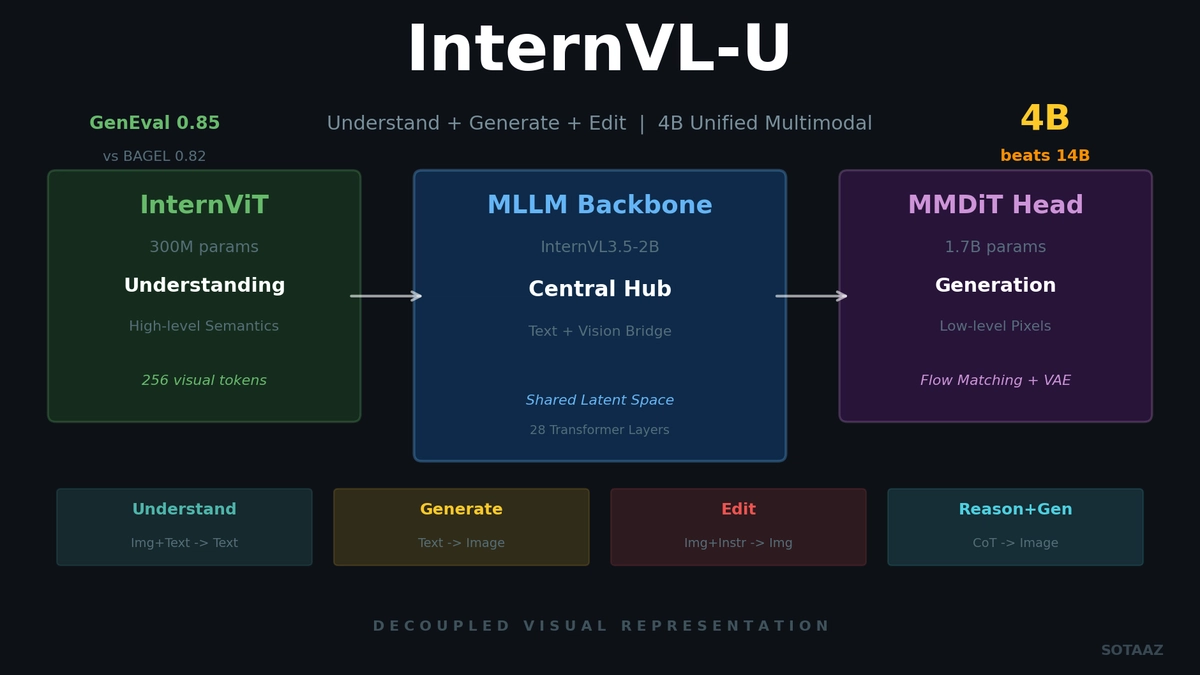

Shanghai AI Lab's InternVL-U. A single 4B parameter model handles image understanding, generation, editing, and reasoning-based generation. Decoupled visual representations outperform 14B BAGEL on GenEval and DPG-Bench.

InternVL-U: Understanding + Generation + Editing in One 4B Model -- A New Standard for Unified Multimodal AI

There's been a long-standing goal in multimodal AI: a single model that can understand, generate, and edit images. Previously, each task required a separate model. Image understanding used InternVL, generation used Stable Diffusion, editing used InstructPix2Pix -- pipelines became complex, and knowledge sharing between models was impossible.

InternVL-U, released by Shanghai AI Lab in March 2026, tackles this problem head-on. With just 4B parameters in a single model, it handles multimodal understanding, text-to-image generation, image editing, and reasoning-based generation. It outperforms the 14B-parameter BAGEL on GenEval (0.85 vs 0.82) and DPG-Bench (85.18 vs 85.07).

The secret lies in an architectural design called Decoupled Visual Representation.

The Unified Multimodal Dilemma: Limits of a Single Representation

Previous unified multimodal models (Emu3, Show-o, Janus) tried to handle both understanding and generation with a single visual tokenizer. This creates a fundamental conflict.

What Understanding requires:

- High-level semantic features (recognizing that something is a "cat")

- Object relationships (the cat is sitting "on" the mat)

- Overall scene context

What Generation requires:

- Low-level pixel information (exact colors, textures)

- Spatial precision (exact position and size of objects)

- Visual details (shadows, reflections, textures)

A single representation cannot excel at both. Optimizing for understanding degrades generation quality; optimizing for generation weakens understanding capability. This is the "representation conflict" problem.

InternVL-U's Solution: Decoupled Visual Representation

InternVL-U's core idea is simple:

Use different visual representations for understanding and generation.

| Pipeline | Component | Purpose | Feature Type |

|---|---|---|---|

| Understanding | Pre-trained ViT (InternViT-300M) | Image recognition/reasoning | High-level semantic features |

| Generation | VAE (Qwen-Image) | Image generation/editing | Low-level continuous latent representation |

The ViT focuses exclusively on understanding, and the VAE focuses exclusively on generation. Since the two representations don't interfere with each other's learning, each achieves optimal performance in its respective role.

Architecture Details: Three Modules

InternVL-U is a 4B parameter model composed of three modules.

Module 1: Visual Understanding Encoder (InternViT-300M)

- Parameters: ~300M

- Structure: 24 Transformer layers, hidden size 1024, 16 attention heads

- Role: Extract high-level semantic features from raw pixels

- Token processing: Encode image patches into 1024 visual tokens → compress to 256 tokens via pixel shuffle

- Resolution: Dynamic High Resolution strategy with 448x448 tile splitting

Module 2: Context Backbone / MLLM (InternVL3.5-2B)

- Parameters: ~2B

- Structure: 28 Transformer layers (Qwen-series LLM backbone)

- Role: Text generation, semantic reasoning, bridge between understanding and generation

- Pattern: ViT-MLP-LLM architecture (InternVL family standard)

This module is the central hub. It processes text tokens and visual tokens in a shared latent space, converting understanding results into conditioning signals for the generation module.

Module 3: Visual Generation Head (Custom MMDiT, 1.7B)

- Parameters: ~1.7B

- Structure: 20 Transformer layers, 12 attention heads

- Key innovations:

- Gating mechanism within attention blocks: First of its kind in MMDiT architecture

- Multimodal Scalable RoPE (MSRoPE): Variable resolution handling

- Flow Matching: Velocity parameterization instead of standard diffusion noise prediction

- VAE: Same VAE as Qwen-Image for conversion between continuous latent space and pixel space

Inter-Module Connection

The unified hidden states produced by the MLLM backbone serve as conditioning signals for the MMDiT generation head. Dual projectors + variance normalization are used to resolve feature distribution differences from the VLM branch.

Four Operating Modes

InternVL-U performs four tasks from a single checkpoint:

| Mode | Input | Output | Example |

|---|---|---|---|

| Text generation | Image + Text | Text | "What amino acids are visible in this image?" |

| Image generation | Text | Image | "A futuristic city at sunset" |

| Image editing | Image + Instruction | Edited image | "Change the sky to sunset colors" |

| Reasoning-based generation | Text | CoT text + Image | "Generate a physics diagram" |

The 4th mode is particularly unique. It decomposes abstract prompts ("generate happiness") through Chain-of-Thought into specific visual elements, emotional intent, and typographic constraints before generating the image.

Training Pipeline: 3 Stages

Stage 1: Generation Head Pre-training

- Steps: 250,000

- Resolution: Fixed 512px

- MLLM: Frozen (only MMDiT trains)

- Data: T2I : Editing = 4:1

- Goal: Train MMDiT to generate images conditioned on MLLM hidden states

Stage 2: Variable Resolution Pre-training

- Steps: 60,000

- Resolution: Variable 512~1024px

- MLLM: Frozen

- Goal: Variable resolution adaptation + strict aesthetic filtering

Stage 3: Unified SFT (Full Model Training)

- Steps: 20,000

- MLLM: Unfrozen (full end-to-end training)

- Data: Generation : Editing : Understanding = 1:1:2

- Loss weights: NTP : VP = 1:20

- Goal: Unified optimization including CoT reasoning data

Data Synthesis Pipeline

One of InternVL-U's strengths is its synthetic data across 5 domains:

- Text-centric: Bilingual (Chinese/English) text rendering

- Science-centric: Physics diagrams, computer science visualizations

- Spatial-centric: Solid geometry, CAD multi-view, 3D rotation

- Humor-centric: Meme generation/editing

- Reasoning-centric (CoT): Chain-of-Thought augmentation for general, knowledge, meme, and scientific images

Benchmark Results

Image Generation (GenEval)

| Model | Parameters | Single Obj | Two Obj | Counting | Colors | Overall |

|---|---|---|---|---|---|---|

| InternVL-U | 4B | 0.99 | 0.94 | 0.74 | 0.91 | 0.85 |

| BAGEL | 14B | -- | -- | -- | -- | 0.82 |

| Janus-Pro | 7B | -- | -- | -- | -- | 0.80 |

| Qwen-Image | 20B | -- | -- | -- | -- | 0.87 |

4B beats 14B (BAGEL) and comes close to 5x larger 20B (Qwen-Image).

Multimodal Understanding

| Benchmark | InternVL-U (4B) | BAGEL (14B) | Janus-Pro (7B) |

|---|---|---|---|

| OCRBench | 83.9 | 73.3 | 48.7 |

| MMMU | 54.7 | 55.3 | 36.3 |

| MME-P | 1607.5 | 1687.0 | 1444.0 |

Leads BAGEL by 10.6 points on OCRBench. Nearly tied on MMMU with just 0.6 point difference.

Image Generation (DPG-Bench)

| Model | Global | Entity | Attribute | Relation | Overall |

|---|---|---|---|---|---|

| InternVL-U | 90.39 | 90.78 | 90.68 | 90.29 | 85.18 |

| BAGEL | -- | -- | -- | -- | 85.07 |

| Janus-Pro | -- | -- | -- | -- | 84.19 |

A 3.5x smaller model outperforms BAGEL on DPG-Bench as well.

Practical Usage

import torch

from PIL import Image

from internvlu import InternVLUPipeline

pipeline = InternVLUPipeline.from_pretrained(

"InternVL-U/InternVL-U",

torch_dtype=torch.bfloat16,

).to("cuda")

# Image understanding

output = pipeline(

prompt="What animals do you see in this photo?",

image=Image.open("cat.jpg").convert("RGB"),

generation_mode="text",

)

# Image generation

output = pipeline(

prompt="A futuristic city at sunset",

height=576, width=1024,

generation_mode="image",

generator=torch.Generator(device="cuda").manual_seed(42),

)

# Image editing

output = pipeline(

prompt="Change the sky to sunset colors",

image=Image.open("photo.jpg").convert("RGB"),

generation_mode="image",

)- License: MIT

- Model weights: HuggingFace

- Code: GitHub

- VRAM: Estimated ~16-24GB at bf16

Why Does 4B Beat 14B?

Two key factors:

1. Decoupled representations eliminate optimization conflicts

BAGEL has 14B parameters, but understanding and generation share representations, interfering with each other's learning. InternVL-U completely separates ViT and VAE, letting each focus on its own role. Fewer parameters achieve higher efficiency.

2. CoT data augmentation

Chain-of-Thought training that decomposes abstract user instructions into concrete visual elements makes a particularly large difference in text rendering and knowledge-intensive generation.

Conclusion

What InternVL-U demonstrates is that "size isn't everything."

- Decoupling is key: Forcing the same representation for understanding and generation hurts both

- 4B can beat 14B: Architecture design matters more than parameter count

- Unified models are now practical: Understanding + generation + editing from a single checkpoint, MIT licensed

- CoT is effective for generation too: Reasoning-based generation opens a new direction

References:

Subscribe to Newsletter

Related Posts

MIRAGE — Do Multimodal AIs Actually "See" Images?

GPT-5.1, Gemini 3 Pro, and Claude Opus 4.5 retain 70-80% of benchmark scores without any image input. A 3B text-only model outperforms all multimodal models and radiologists on chest X-ray benchmarks. Stanford MIRAGE paper review.

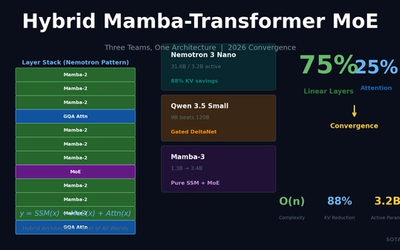

Hybrid Mamba-Transformer MoE: Three Teams, One Architecture -- The 2026 LLM Convergence

NVIDIA Nemotron 3 Nano, Qwen 3.5, and Mamba-3 independently converge on 75% linear layers + 25% attention + MoE. 88% KV-cache reduction, O(n) complexity for long-context processing.

Spectrum: 3-5x Diffusion Speedup Without Any Training -- The Power of Chebyshev Polynomials

CVPR 2026 paper from Stanford/ByteDance. Chebyshev polynomial feature forecasting achieves 4.79x speedup on FLUX.1, 4.56x on HunyuanVideo. Training-free, instantly applicable to any model.