SAE and TensorLens: The Age of Feature Interpretability

Individual neurons are uninterpretable. Sparse Autoencoders extract monosemantic features from model internals, and TensorLens analyzes the entire Transformer as a single unified tensor.

SAE and TensorLens: The Age of Feature Interpretability

In the previous two posts, we:

- Logit/Tuned Lens: Read the model's intermediate predictions

- Activation Patching: Traced which activations are causally responsible for the answer

But here we hit a fundamental problem:

What do the activations we observe and manipulate actually *mean*?

Each dimension of an activation vector corresponds to an individual neuron. But these neurons are polysemantic -- a single neuron fires for academic citations, English dialogue, HTTP requests, and Korean text simultaneously. Clean interpretation at the neuron level is impossible.

This post covers two modern approaches that address this problem:

- Sparse Autoencoder (SAE): Decompose dense activations into sparse, monosemantic features

- TensorLens: Unify the entire Transformer computation into a single high-order tensor

Related Posts

MIRAGE — Do Multimodal AIs Actually "See" Images?

GPT-5.1, Gemini 3 Pro, and Claude Opus 4.5 retain 70-80% of benchmark scores without any image input. A 3B text-only model outperforms all multimodal models and radiologists on chest X-ray benchmarks. Stanford MIRAGE paper review.

InternVL-U: Understanding + Generation + Editing in One 4B Model -- A New Standard for Unified Multimodal AI

Shanghai AI Lab's InternVL-U. A single 4B parameter model handles image understanding, generation, editing, and reasoning-based generation. Decoupled visual representations outperform 14B BAGEL on GenEval and DPG-Bench.

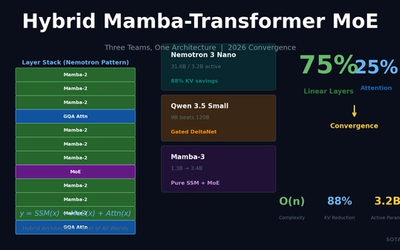

Hybrid Mamba-Transformer MoE: Three Teams, One Architecture -- The 2026 LLM Convergence

NVIDIA Nemotron 3 Nano, Qwen 3.5, and Mamba-3 independently converge on 75% linear layers + 25% attention + MoE. 88% KV-cache reduction, O(n) complexity for long-context processing.