PixArt-α: How to Cut Stable Diffusion Training Cost from $600K to $26K

A decomposed training strategy delivers roughly 23x training efficiency, making text-to-image models accessible to academic researchers.

PixArt-α: A New Paradigm for Efficient High-Resolution Image Generation

TL;DR: PixArt-α is a DiT-based text-to-image model that matches or exceeds Stable Diffusion's image quality at about 90% less training cost. Its key innovations are a decomposed training strategy, a T5 text encoder, and optimized cross-attention.
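The headline numbers can be checked with simple arithmetic. The sketch below takes the title's $600K (Stable Diffusion) and $26K (PixArt-α) figures at face value; the helper names `cost_ratio` and `percent_saved` are illustrative, not part of any PixArt-α code.

```python
def cost_ratio(baseline_usd: float, new_usd: float) -> float:
    """How many times cheaper the new training run is than the baseline."""
    return baseline_usd / new_usd


def percent_saved(baseline_usd: float, new_usd: float) -> float:
    """Percentage of the baseline cost that the new run avoids."""
    return 100.0 * (1.0 - new_usd / baseline_usd)


if __name__ == "__main__":
    baseline = 600_000  # Stable Diffusion cost, as stated in the title
    pixart = 26_000     # PixArt-α cost, as stated in the title
    print(f"{cost_ratio(baseline, pixart):.1f}x cheaper")
    print(f"{percent_saved(baseline, pixart):.1f}% saved")
```

With these inputs the ratio is about 23.1x, the source of the "23x" claim, and the saving is about 95.7%. By the same arithmetic, a 90% reduction corresponds to a 10x ratio, so the TL;DR's figure is the more conservative of the two claims.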

1. Introduction: The Need for Efficient T2I Generation

1.1 Problems with Existing T2I Models

Training large-scale text-to-image models like Stable Diffusion and DALL-E 2 requires enormous resources:

Related Posts

Models & Algorithms

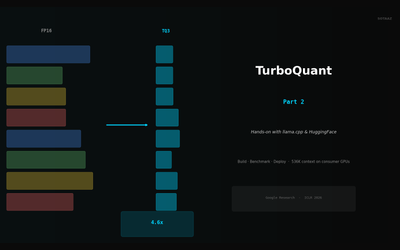

TurboQuant in Practice — KV Cache Compression with llama.cpp and HuggingFace

Build llama.cpp with turbo3, HuggingFace integration, memory calculator, config guide. 536K context on 70B models.

Models & Algorithms

TurboQuant Explained — Google's Extreme KV Cache Compression Algorithm

Compress KV cache to 3-bit with PolarQuant + Lloyd-Max. 4.6x memory savings with zero accuracy loss, no retraining.

Models & Algorithms

Qwen 3.5 Fine-Tuning Practical Guide — Build Your Own Model with LoRA

Complete guide to fine-tuning Qwen 3.5 with LoRA/QLoRA. From 8GB GPU QLoRA setup to Unsloth optimization, GGUF conversion, and Ollama deployment.