LLM Reasoning Failures Part 1: Structural Limitations -- Scaling Won't Fix These

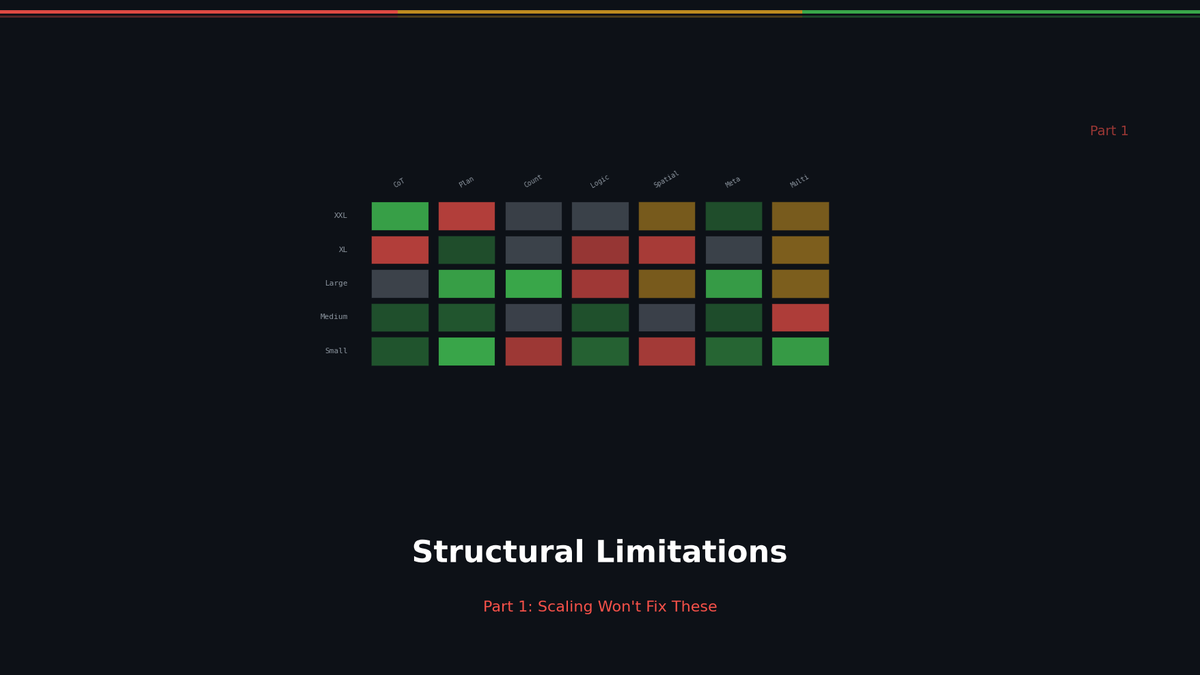

Reversal Curse, Counting, Compositional Reasoning — fundamental Transformer failures tested across 7 models.

This is the first installment in our series dissecting LLM reasoning failures. In this post, we cover three fundamental limitations that persist no matter how much you scale the model or expand the training data.

- The Reversal Curse

- Counting Failures

- The Compositional Reasoning Wall

These failures stem from the Transformer architecture itself. Prompt engineering and scaling cannot fundamentally resolve them. Drawing from the survey by Song, Han, and Goodman (2025), we present hands-on experiments across 7 models alongside the theoretical analysis.

1. The Reversal Curse

What the Paper Says

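If a model has learned "A is B," can it infer "B is A"? Song et al. (2025) discuss this failure as the **Reversal Curse**, a term introduced by Berglund et al. (2023). The Transformer's next-token prediction objective is unidirectional: training on "A is B" only teaches the model to predict B when A comes first in the context. Nothing in that update teaches it to produce A when B comes first, so "B is A" cannot be recalled unless it was learned separately.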
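The directional nature of this training signal can be sketched with a toy model. The snippet below is a lookup-table caricature, not a Transformer: it only counts which token follows each left-to-right prefix. The names `train` and `predict`, the underscore-joined tokens, and the one-sentence corpus are all assumptions made for illustration.

```python
from collections import Counter, defaultdict


def train(sentences):
    """Count next-token continuations for every left-to-right prefix."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        tokens = sentence.split()
        for i in range(1, len(tokens)):
            prefix = tuple(tokens[:i])
            counts[prefix][tokens[i]] += 1
    return counts


def predict(counts, prompt):
    """Return the most frequent continuation, or None if the prefix was never seen."""
    prefix = tuple(prompt.split())
    if prefix not in counts:
        return None
    return counts[prefix].most_common(1)[0][0]


# Toy corpus containing only the forward ("A is B") statement.
corpus = ["Tom_Cruise 's mother is Mary_Lee_Pfeiffer"]
model = train(corpus)

forward = predict(model, "Tom_Cruise 's mother is")
reverse = predict(model, "Mary_Lee_Pfeiffer 's son is")

# The reverse direction only works once it is trained on explicitly.
model_both = train(corpus + ["Mary_Lee_Pfeiffer 's son is Tom_Cruise"])
reverse_after = predict(model_both, "Mary_Lee_Pfeiffer 's son is")

print(forward, reverse, reverse_after)
```

In this sketch the forward query succeeds, the reverse query finds no matching prefix, and the reverse query succeeds only after the reversed sentence is added to the corpus. A real LLM generalizes far more than a prefix table does, so this illustrates the direction of the training signal rather than the model's full behavior.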

Critically, adding more data does not close this gap, because entity mentions follow a long-tailed (Zipfian) distribution. The sentence "Tom Cruise's mother is Mary Lee Pfeiffer" may appear in training data, but "Mary Lee Pfeiffer's son is Tom Cruise" is far rarer. When a celebrity's name is the subject, data is abundant; when an obscure person's name is the subject, data is scarce. This asymmetry is a property of how text is distributed, not of corpus size.
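As a rough back-of-the-envelope illustration of that asymmetry, the sketch below assumes that how often an entity appears as a sentence subject scales with a Zipfian weight of its popularity rank. The function `zipf_weight`, the exponent of 1.0, and both ranks are hypothetical values chosen for illustration, not measurements from any corpus.

```python
def zipf_weight(rank: int, exponent: float = 1.0) -> float:
    """Unnormalized Zipfian frequency for an item at the given popularity rank."""
    return rank ** -exponent


# Hypothetical popularity ranks, chosen only for illustration.
celebrity_rank = 10
obscure_rank = 100_000

# Assumption: subject frequency scales with the entity's overall popularity.
forward_weight = zipf_weight(celebrity_rank)  # "<celebrity>'s mother is ..."
reverse_weight = zipf_weight(obscure_rank)    # "<obscure person>'s son is ..."

ratio = forward_weight / reverse_weight
print(f"forward : reverse is roughly {ratio:,.0f} : 1")
```

Under these made-up ranks the forward phrasing is about four orders of magnitude more common than the reverse one. Multiplying the corpus size scales both counts by the same factor, so the ratio stays the same, which is one way to read the claim that scaling alone does not remove the imbalance.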
