Diffusion LLM Part 1: Diffusion Fundamentals -- From DDPM to Score Matching

Forward/Reverse Process, ELBO, Simplified Loss, Score Function -- the mathematical principles of diffusion models explained intuitively.


To understand Diffusion-based language models, you first need to understand Diffusion models themselves. In this post, we cover the core principles of Diffusion that have been proven in image generation. There is some math involved, but I have included intuitive explanations alongside the formulas, so you can follow the flow even if the equations feel unfamiliar.

This is the first installment of the Diffusion LLM series. See the Hub post for a series overview.

The Core Idea Behind Diffusion

The idea behind Diffusion models is surprisingly simple.

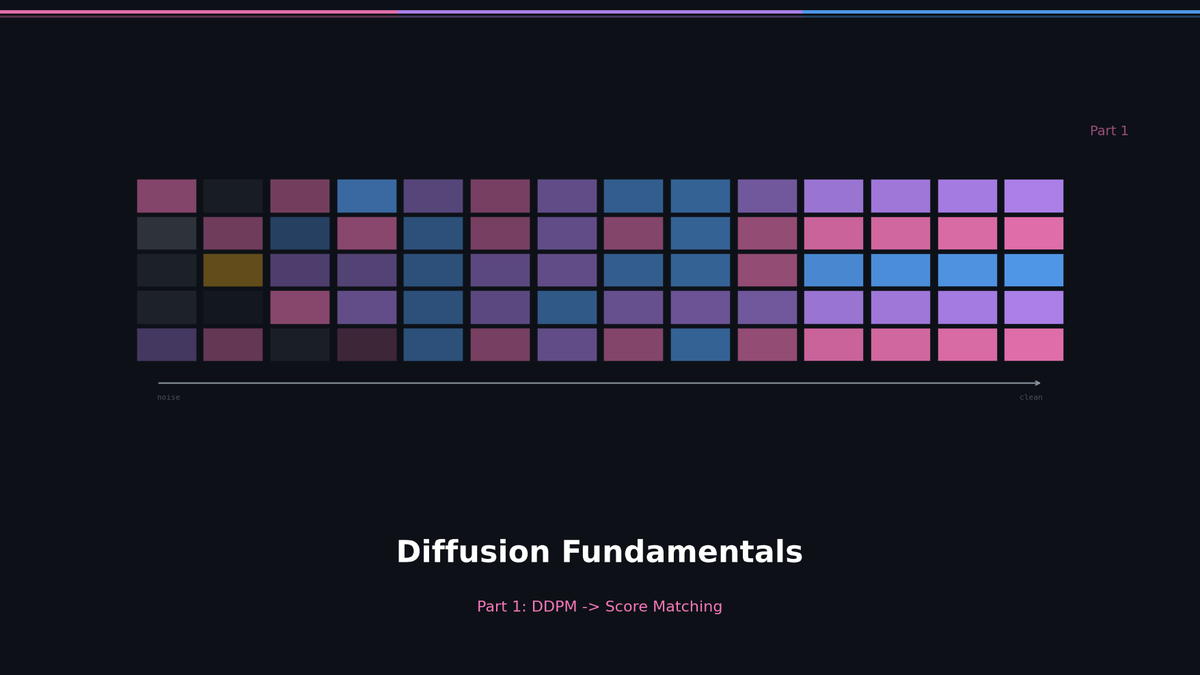

- Gradually add noise to clean data until it becomes pure random noise (Forward Process)

- Train a neural network to learn the reverse -- how to recover clean data from noise (Reverse Process)

Think of a single drop of ink falling into water. It gradually spreads out until the color is uniform. The forward process is this diffusion. The reverse process is recovering the original shape of the ink drop from the uniformly colored water. Physically, this never happens on its own, but the key insight of Diffusion models is that a neural network can learn this "time reversal."

Forward Process: Adding Noise

The forward process starts from the original data x_0 and progressively adds Gaussian noise over T steps.

Noise addition at each step t:

q(x_t | x_{t-1}) = N(x_t; sqrt(1 - beta_t) * x_{t-1}, beta_t * I)

Here, beta_t is a small positive value from the noise schedule (in DDPM, it increases linearly from 0.0001 to 0.02). At each step, the previous state is scaled down by sqrt(1 - beta_t), and Gaussian noise with variance beta_t (standard deviation sqrt(beta_t)) is added.
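To make the single step concrete, here is a minimal sketch in plain Python, using scalars instead of tensors. The helper names `linear_betas` and `forward_step` are ours, not from any library, and the schedule endpoints are the DDPM values quoted above. The check at the end illustrates why the signal is scaled by sqrt(1 - beta_t): if x_{t-1} has unit variance, x_t has variance (1 - beta_t) + beta_t = 1.

```python
import math
import random

def linear_betas(T=1000, beta_start=1e-4, beta_end=0.02):
    """Linear noise schedule: betas[t - 1] holds beta_t for t = 1..T."""
    return [beta_start + (beta_end - beta_start) * i / (T - 1) for i in range(T)]

def forward_step(x_prev, beta_t, rng):
    """Sample x_t ~ N(sqrt(1 - beta_t) * x_{t-1}, beta_t) independently for each scalar."""
    scale = math.sqrt(1.0 - beta_t)   # shrink the previous state
    std = math.sqrt(beta_t)           # noise std is sqrt(beta_t); its variance is beta_t
    return [scale * x + std * rng.gauss(0.0, 1.0) for x in x_prev]

rng = random.Random(0)
betas = linear_betas()

# Start from unit-variance samples and apply the largest step (t = T)
x_prev = [rng.gauss(0.0, 1.0) for _ in range(20000)]
x_t = forward_step(x_prev, betas[-1], rng)
variance = sum(v * v for v in x_t) / len(x_t)   # mean is ~0, so E[x^2] ~ Var
print(f"beta_1={betas[0]:.4f}, beta_T={betas[-1]:.4f}, Var[x_t]~{variance:.3f}")
```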

When T is large enough (DDPM uses T=1000), x_T is nearly pure Gaussian noise N(0, I): virtually all information about the original data has been destroyed.
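A quick numerical check of "nearly pure noise", sketched under the same assumptions (DDPM's linear schedule, T = 1000, scalar data): instead of sampling, apply the one-step rule to the coefficients themselves and track how much of x_0 survives after all T steps and how much noise variance accumulates. The helper name is hypothetical.

```python
import math

def linear_betas(T=1000, beta_start=1e-4, beta_end=0.02):
    """Linear noise schedule: betas[t - 1] holds beta_t for t = 1..T."""
    return [beta_start + (beta_end - beta_start) * i / (T - 1) for i in range(T)]

# Track x_t = signal_scale * x_0 + (Gaussian noise with variance noise_var)
signal_scale, noise_var = 1.0, 0.0
for beta in linear_betas():
    signal_scale *= math.sqrt(1.0 - beta)          # x_0's coefficient shrinks each step
    noise_var = (1.0 - beta) * noise_var + beta   # old noise shrinks, new noise is added

print(f"coefficient on x_0 after T steps: {signal_scale:.5f}")
print(f"noise variance after T steps:     {noise_var:.5f}")
```

With this schedule the coefficient on x_0 ends up well below 0.01 (on the order of 0.006), which is why x_T can be treated as a draw from N(0, 1).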

There is an important mathematical trick. If we define alpha_t = 1 - beta_t and alpha_bar_t = alpha_1 * alpha_2 * ... * alpha_t, we can skip all intermediate steps and jump directly from x_0 to any arbitrary time step x_t:

q(x_t | x_0) = N(x_t; sqrt(alpha_bar_t) * x_0, (1 - alpha_bar_t) * I)

This can be rewritten using the reparameterization trick:

x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * epsilon, epsilon ~ N(0, I)

This formula is crucial for training. At each training step, we can randomly sample t from 1 to T and generate x_t at that noise level in a single operation.
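The shortcut can be checked empirically. The sketch below (plain Python, scalar data, DDPM's linear schedule; `alpha_bars`, `q_sample`, and `iterate_steps` are hypothetical helper names) draws x_t once with the closed form and once by simulating all t single steps, then compares both sample means and variances with the predicted sqrt(alpha_bar_t) * x_0 and 1 - alpha_bar_t.

```python
import math
import random

def linear_betas(T=1000, beta_start=1e-4, beta_end=0.02):
    return [beta_start + (beta_end - beta_start) * i / (T - 1) for i in range(T)]

def alpha_bars(betas):
    """alpha_bar_t = (1 - beta_1) * ... * (1 - beta_t); out[t - 1] holds alpha_bar_t."""
    out, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        out.append(prod)
    return out

def q_sample(x0, t, abar, rng):
    """Closed form: x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * eps."""
    a = abar[t - 1]
    return math.sqrt(a) * x0 + math.sqrt(1.0 - a) * rng.gauss(0.0, 1.0)

def iterate_steps(x0, t, betas, rng):
    """Reference: apply q(x_s | x_{s-1}) for s = 1..t, one step at a time."""
    x = x0
    for beta in betas[:t]:
        x = math.sqrt(1.0 - beta) * x + math.sqrt(beta) * rng.gauss(0.0, 1.0)
    return x

def mean_var(xs):
    m = sum(xs) / len(xs)
    return m, sum((x - m) ** 2 for x in xs) / len(xs)

rng = random.Random(0)
betas = linear_betas()
abar = alpha_bars(betas)
x0, t, n = 1.5, 200, 2000

direct = mean_var([q_sample(x0, t, abar, rng) for _ in range(n)])
stepped = mean_var([iterate_steps(x0, t, betas, rng) for _ in range(n)])
expected = (math.sqrt(abar[t - 1]) * x0, 1.0 - abar[t - 1])
print("closed form:", direct, "step by step:", stepped, "theory:", expected)
```

The closed form costs one Gaussian draw regardless of t, while the step-by-step reference costs t draws; that difference is what makes sampling a random t per training example cheap.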

Reverse Process: Removing Noise

The reverse process runs the forward process in the opposite direction. Starting from pure noise x_T, it removes noise step by step to recover x_0.

p_theta(x_{t-1} | x_t) = N(x_{t-1}; mu_theta(x_t, t), sigma_t^2 * I)

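Here, mu_theta is the mean predicted by the neural network. The model takes the current noisy state x_t and the time step t as input and predicts the mean of x_{t-1}, one denoising step back. In DDPM the variance sigma_t^2 is not learned; it is fixed by the noise schedule (for example, sigma_t^2 = beta_t).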

The key question: what exactly should the model learn to predict?

There are three options:

- Predict x_0 directly (the original data)

- Predict mu directly (the mean of the reverse step)

- Predict epsilon (the added noise itself)

Ho et al. (2020) in the DDPM paper found that predicting epsilon, combined with the simplified loss described below, gave the best sample quality. Intuitively, asking the model "what noise was mixed in here?" turns out to be an easier problem than asking "what was the original?"
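The three targets are interchangeable once x_t and t are known, so the choice is about what is easiest to learn rather than what can be expressed. A minimal sketch of the conversions, assuming scalar data and DDPM's linear schedule (the helper names are ours): invert the closed-form forward equation to get x_0 from epsilon, and use the epsilon form of the reverse mean from the DDPM paper, mu = (x_t - beta_t / sqrt(1 - alpha_bar_t) * epsilon) / sqrt(alpha_t). The check confirms that it equals the x_0 form of the same mean (the mean of the forward posterior q(x_{t-1}|x_t, x_0)) when epsilon and x_0 are consistent.

```python
import math

def linear_betas(T=1000, beta_start=1e-4, beta_end=0.02):
    return [beta_start + (beta_end - beta_start) * i / (T - 1) for i in range(T)]

def alpha_bars(betas):
    out, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        out.append(prod)
    return out

def x0_from_eps(x_t, eps, abar_t):
    """Invert x_t = sqrt(abar_t) * x_0 + sqrt(1 - abar_t) * eps for x_0."""
    return (x_t - math.sqrt(1.0 - abar_t) * eps) / math.sqrt(abar_t)

def mu_from_eps(x_t, eps, beta_t, abar_t):
    """Reverse-step mean written in terms of the noise (alpha_t = 1 - beta_t)."""
    return (x_t - beta_t / math.sqrt(1.0 - abar_t) * eps) / math.sqrt(1.0 - beta_t)

def mu_from_x0(x_t, x0, beta_t, abar_t, abar_prev):
    """The same mean written in terms of x_0 (forward-posterior mean)."""
    c0 = math.sqrt(abar_prev) * beta_t / (1.0 - abar_t)
    ct = math.sqrt(1.0 - beta_t) * (1.0 - abar_prev) / (1.0 - abar_t)
    return c0 * x0 + ct * x_t

betas = linear_betas()
abar = alpha_bars(betas)
x0_true, eps_true, t = 0.7, -1.2, 500
beta_t, abar_t, abar_prev = betas[t - 1], abar[t - 1], abar[t - 2]
x_t = math.sqrt(abar_t) * x0_true + math.sqrt(1.0 - abar_t) * eps_true

x0_hat = x0_from_eps(x_t, eps_true, abar_t)        # predicting eps gives x_0 ...
mu_eps = mu_from_eps(x_t, eps_true, beta_t, abar_t)  # ... and mu
mu_x0 = mu_from_x0(x_t, x0_hat, beta_t, abar_t, abar_prev)
print(x0_hat, mu_eps, mu_x0)
```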

Training Objective: From ELBO to Simplified Loss

The theoretical training objective for Diffusion models is the ELBO (Evidence Lower BOund). As with VAEs, we maximize a lower bound on the log-likelihood of the data, log p(x_0); equivalently, we minimize the negative bound:

L_VLB = E_q[KL(q(x_T|x_0) || p(x_T)) + sum_{t=2}^{T} KL(q(x_{t-1}|x_t, x_0) || p_theta(x_{t-1}|x_t)) - log p_theta(x_0|x_1)]

This expression breaks down into three parts:

- L_T: Whether the end of the forward process, q(x_T|x_0), is close to the prior p(x_T) = N(0,I) (no trainable parameters)

- L_{t-1}: Whether each reverse step is close to the true posterior

- L_0: Final reconstruction quality

Mathematically, q(x_{t-1}|x_t, x_0) is called the forward posterior: the forward process inverted with Bayes' rule. Because it is conditioned on x_0, it is tractable: a Gaussian with closed-form mean and variance. This is the "ground truth," and the model's prediction p_theta is trained to match it.
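For scalar data the Bayes inversion can be done by hand: as a function of x_{t-1}, q(x_{t-1}|x_t, x_0) is proportional to q(x_t|x_{t-1}) * q(x_{t-1}|x_0), a product of two Gaussians, so their precisions add and their precision-weighted means add. The sketch below (our own helper names, DDPM's linear schedule assumed) performs that multiplication numerically and compares it with the closed-form posterior from the DDPM paper, whose variance is beta_t * (1 - alpha_bar_{t-1}) / (1 - alpha_bar_t). It only covers t >= 2, matching the range of the L_{t-1} terms; at t = 1 the posterior collapses onto x_0.

```python
import math

def linear_betas(T=1000, beta_start=1e-4, beta_end=0.02):
    return [beta_start + (beta_end - beta_start) * i / (T - 1) for i in range(T)]

def alpha_bars(betas):
    out, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        out.append(prod)
    return out

def posterior_closed_form(x_t, x0, t, betas, abar):
    """Closed-form mean and variance of q(x_{t-1} | x_t, x_0)."""
    beta_t, a_t, a_prev = betas[t - 1], abar[t - 1], abar[t - 2]
    mean = (math.sqrt(a_prev) * beta_t / (1.0 - a_t)) * x0 \
        + (math.sqrt(1.0 - beta_t) * (1.0 - a_prev) / (1.0 - a_t)) * x_t
    var = beta_t * (1.0 - a_prev) / (1.0 - a_t)
    return mean, var

def posterior_by_bayes(x_t, x0, t, betas, abar):
    """Multiply q(x_t | x_{t-1}) and q(x_{t-1} | x_0) as Gaussians in x_{t-1}."""
    assert t >= 2, "at t = 1 the posterior is a point mass at x_0"
    beta_t, a_prev = betas[t - 1], abar[t - 2]
    alpha_t = 1.0 - beta_t
    # q(x_t | x_{t-1}) = N(sqrt(alpha_t) x_{t-1}, beta_t), read as a function of x_{t-1}
    prec_1 = alpha_t / beta_t
    pm_1 = math.sqrt(alpha_t) * x_t / beta_t          # precision * mean
    # q(x_{t-1} | x_0) = N(sqrt(alpha_bar_{t-1}) x_0, 1 - alpha_bar_{t-1})
    prec_2 = 1.0 / (1.0 - a_prev)
    pm_2 = math.sqrt(a_prev) * x0 / (1.0 - a_prev)
    var = 1.0 / (prec_1 + prec_2)
    return var * (pm_1 + pm_2), var

betas = linear_betas()
abar = alpha_bars(betas)
checks = []
for t in (2, 10, 500, 1000):
    closed = posterior_closed_form(0.3, -0.8, t, betas, abar)
    bayes = posterior_by_bayes(0.3, -0.8, t, betas, abar)
    checks.append((t, closed, bayes))
    print(t, closed, bayes)
```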

The discovery by Ho et al.: replacing this complex ELBO with the following simple loss gave better sample quality in their experiments (although optimizing the full bound still yielded better log-likelihoods):

L_simple = E_{t, x_0, epsilon}[|| epsilon - epsilon_theta(x_t, t) ||^2]

In plain terms: "Minimize the difference between the actual noise epsilon mixed into x_t at a random time step t, and the noise epsilon_theta predicted by the model."

This is what makes DDPM training remarkably simple.
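One way to see how the bound and L_simple relate, sketched under DDPM's fixed-variance choice sigma_t^2 = beta_t (an assumption here; the paper also considers the posterior variance): when the reverse mean is written in terms of epsilon, the parameter-dependent part of each L_{t-1} term, (mu_tilde - mu_theta)^2 / (2 sigma_t^2), collapses to w_t * (epsilon - epsilon_theta)^2 with w_t = beta_t^2 / (2 sigma_t^2 alpha_t (1 - alpha_bar_t)). L_simple keeps the squared error and sets every w_t to 1. Helper names are hypothetical and the data is scalar.

```python
import math

def linear_betas(T=1000, beta_start=1e-4, beta_end=0.02):
    return [beta_start + (beta_end - beta_start) * i / (T - 1) for i in range(T)]

def alpha_bars(betas):
    out, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        out.append(prod)
    return out

def mu_from_eps(x_t, eps, beta_t, abar_t):
    """Reverse-step mean parameterized by a noise value (alpha_t = 1 - beta_t)."""
    return (x_t - beta_t / math.sqrt(1.0 - abar_t) * eps) / math.sqrt(1.0 - beta_t)

def bound_weight(t, betas, abar):
    """w_t of the parameter-dependent part of L_{t-1}, assuming sigma_t^2 = beta_t."""
    beta_t = betas[t - 1]
    sigma2 = beta_t
    return beta_t ** 2 / (2.0 * sigma2 * (1.0 - beta_t) * (1.0 - abar[t - 1]))

betas = linear_betas()
abar = alpha_bars(betas)

# Mean-matching term for one example, computed directly from the two means
t, x_t, eps_true, eps_pred = 300, 0.4, 1.1, 0.6
beta_t, abar_t = betas[t - 1], abar[t - 1]
mu_tilde = mu_from_eps(x_t, eps_true, beta_t, abar_t)   # forward-posterior mean
mu_theta = mu_from_eps(x_t, eps_pred, beta_t, abar_t)   # model's mean
mean_term = (mu_tilde - mu_theta) ** 2 / (2.0 * beta_t)
eps_term = bound_weight(t, betas, abar) * (eps_true - eps_pred) ** 2

weights = {s: bound_weight(s, betas, abar) for s in (2, 100, 1000)}
print(mean_term, eps_term, weights)
```

With this schedule the weight is about 0.27 at t = 2 but only about 0.01 at t = T, so setting every weight to 1 shifts emphasis away from the low-noise steps; Ho et al. describe this as letting the network focus on the harder denoising tasks at larger t.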

Training algorithm:

- Sample x_0 from the training data

- Sample t uniformly from {1, ..., T}

- Sample noise epsilon ~ N(0,I)

- Compute x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * epsilon

- Take a gradient descent step on the MSE between the model's prediction epsilon_theta(x_t, t) and the actual epsilon
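The five steps map almost line for line onto code. The sketch below is a minimal, framework-free illustration under strong simplifications: scalar data, DDPM's linear schedule, and a plain Python function standing in for the neural network epsilon_theta (the names `l_simple_batch`, `oracle`, and `zero_model` are hypothetical). It only estimates the loss; an actual training step would backpropagate through a network with an autodiff library and update its weights. For a toy dataset where every x_0 is 0, the noise is exactly recoverable from x_t, so an oracle predictor drives the loss to zero, while always predicting 0 gives roughly E[epsilon^2] = 1.

```python
import math
import random

def linear_betas(T=1000, beta_start=1e-4, beta_end=0.02):
    return [beta_start + (beta_end - beta_start) * i / (T - 1) for i in range(T)]

def alpha_bars(betas):
    out, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        out.append(prod)
    return out

def l_simple_batch(x0_batch, eps_model, abar, rng):
    """Monte Carlo estimate of L_simple over one batch, following the five steps."""
    T = len(abar)
    total = 0.0
    for x0 in x0_batch:                                       # 1. x_0 from the data
        t = rng.randint(1, T)                                 # 2. t uniform on {1, ..., T}
        eps = rng.gauss(0.0, 1.0)                             # 3. eps ~ N(0, 1)
        a = abar[t - 1]
        x_t = math.sqrt(a) * x0 + math.sqrt(1.0 - a) * eps    # 4. closed-form x_t
        total += (eps - eps_model(x_t, t)) ** 2               # 5. squared error
    return total / len(x0_batch)

rng = random.Random(0)
abar = alpha_bars(linear_betas())
data = [0.0] * 5000   # toy dataset: every sample is exactly 0

def oracle(x_t, t):
    # With x_0 = 0, x_t = sqrt(1 - abar_t) * eps, so eps is recoverable exactly
    return x_t / math.sqrt(1.0 - abar[t - 1])

def zero_model(x_t, t):
    return 0.0

loss_oracle = l_simple_batch(data, oracle, abar, rng)
loss_zero = l_simple_batch(data, zero_model, abar, rng)
print(loss_oracle, loss_zero)
```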

The Connection to Score Matching

Around the same time as DDPM (in fact slightly earlier, in 2019), Song and Ermon's score-based models arrived at the same destination via a different route.

The score function is the gradient of the log probability density:

s(x) = nabla_x log p(x) = (1/p(x)) * nabla_x p(x)

Mathematically: p(x) is the probability density function of the data, and nabla_x denotes the gradient with respect to x (the vector of partial derivatives). By the chain rule, differentiating log p(x) gives (1/p(x)) * nabla_x p(x). The result is a vector that points in the direction in which the probability density increases most rapidly from the current position x.

Intuitively: imagine data points scattered across a 2D plane. Some regions are dense with data (high probability), others are nearly empty (low probability). The score function is an arrow at each position pointing toward "where the data is concentrated." Starting from a noisy location and following these arrows leads you closer to the original data.

This connects directly to the Diffusion reverse process. Starting from a noisy x_t and taking small steps in the score function direction gradually brings you closer to a clean x_0.
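A one-dimensional picture of these arrows, as a sketch: the "data distribution" below is a hypothetical two-component Gaussian mixture whose score can be written down exactly. The code checks the analytic score against a finite-difference derivative of log p, then follows the arrows with small deterministic steps x <- x + step * s(x). That is plain gradient ascent on log p, so it climbs to a mode; score-based samplers such as Langevin dynamics also inject noise at every step so that they sample from p instead of collapsing onto a mode, which is omitted here.

```python
import math

# Hypothetical data distribution: a mixture of two 1-D Gaussians
WEIGHTS = (0.5, 0.5)
MEANS = (-2.0, 3.0)
STDS = (0.5, 0.8)

def normal_pdf(x, mean, std):
    return math.exp(-0.5 * ((x - mean) / std) ** 2) / (std * math.sqrt(2.0 * math.pi))

def density(x):
    return sum(w * normal_pdf(x, m, s) for w, m, s in zip(WEIGHTS, MEANS, STDS))

def score(x):
    """s(x) = p'(x) / p(x), using d/dx N(x; m, s^2) = N(x; m, s^2) * (-(x - m) / s^2)."""
    dp = sum(w * normal_pdf(x, m, s) * (-(x - m) / s ** 2)
             for w, m, s in zip(WEIGHTS, MEANS, STDS))
    return dp / density(x)

def score_finite_diff(x, h=1e-5):
    """Central difference of log p, as an independent check."""
    return (math.log(density(x + h)) - math.log(density(x - h))) / (2.0 * h)

# Follow the arrows from a point between the two clusters
x = 0.8
for _ in range(200):
    x += 0.05 * score(x)
print(f"score(0.5)={score(0.5):.3f}, score(-1.0)={score(-1.0):.3f}, end point={x:.4f}")
```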

The key idea of score matching: instead of modeling the data distribution p(x) directly, learn the score function s(x). Writing p(x) = p_unnormalized(x) / Z, working with p(x) directly requires the normalization constant Z = integral p_unnormalized(x) dx, which is intractable in high dimensions. The score avoids it: log p(x) = log p_unnormalized(x) - log Z, and log Z does not depend on x, so it vanishes under differentiation. This is the fundamental reason why the score function is easier to learn.
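A small numerical illustration of why Z drops out, using a hypothetical unnormalized double-well density p_unnormalized(x) = exp(-x^4/4 + x^2) whose normalization constant we never compute. Whatever value is plugged in for log Z, the finite-difference gradient of log p comes out the same, and it matches the analytic score -x^3 + 2x, which needs no Z at all.

```python
def log_unnormalized(x):
    """log of the hypothetical unnormalized density exp(-x**4 / 4 + x**2)."""
    return -x ** 4 / 4.0 + x ** 2

def log_density(x, log_z):
    """log p(x) = log p_unnormalized(x) - log Z, for some guessed value of log Z."""
    return log_unnormalized(x) - log_z

def score_fd(x, log_z, h=1e-5):
    """Central finite difference of log p(x) with respect to x."""
    return (log_density(x + h, log_z) - log_density(x - h, log_z)) / (2.0 * h)

def score_exact(x):
    """d/dx log p_unnormalized(x); Z never appears."""
    return -x ** 3 + 2.0 * x

points = (-2.0, -0.3, 0.0, 1.1, 2.5)
guesses = (0.0, 3.7, -12.0)   # arbitrary, mutually inconsistent guesses for log Z
max_gap = max(abs(score_fd(x, lz) - score_exact(x)) for x in points for lz in guesses)
print(f"largest disagreement: {max_gap:.2e}")
```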

Song et al. (2021) generalized the DDPM forward process as an SDE (Stochastic Differential Equation):

dx = f(x, t)dt + g(t)dw (Forward SDE)

Here, f is the drift coefficient, g is the diffusion coefficient, and w is a Wiener process (Brownian motion).
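As a concrete instance, Song et al. show that DDPM's forward process is a discretization of the variance-preserving (VP) SDE, with f(x, t) = -1/2 * beta(t) * x and g(t) = sqrt(beta(t)). The sketch below simulates it with the Euler-Maruyama method on t in [0, 1]. The linear beta(t) from 0.1 to 20 is an assumption on our part (values commonly paired with the VP SDE), and all names are illustrative. Starting from a point x_0, the mean should decay as x_0 * exp(-B(t)/2), where B(t) is the integral of beta, and the variance should approach 1 - exp(-B(t)): the continuous analogues of sqrt(alpha_bar_t) * x_0 and 1 - alpha_bar_t.

```python
import math
import random

BETA_MIN, BETA_MAX = 0.1, 20.0   # assumed linear beta(t) on t in [0, 1]

def beta(t):
    return BETA_MIN + t * (BETA_MAX - BETA_MIN)

def integrated_beta(t):
    """B(t) = integral of beta(s) ds from 0 to t."""
    return BETA_MIN * t + 0.5 * (BETA_MAX - BETA_MIN) * t ** 2

def f(x, t):
    """Drift coefficient of the VP SDE."""
    return -0.5 * beta(t) * x

def g(t):
    """Diffusion coefficient of the VP SDE."""
    return math.sqrt(beta(t))

def simulate_forward(x0, t_end, n_steps, rng):
    """Euler-Maruyama: x <- x + f(x, t) dt + g(t) sqrt(dt) z, with z ~ N(0, 1)."""
    dt = t_end / n_steps
    x = x0
    for i in range(n_steps):
        t = i * dt
        x = x + f(x, t) * dt + g(t) * math.sqrt(dt) * rng.gauss(0.0, 1.0)
    return x

def mean_var(xs):
    m = sum(xs) / len(xs)
    return m, sum((x - m) ** 2 for x in xs) / len(xs)

rng = random.Random(0)
x0, n = 2.0, 2000
results = {}
for t_end, n_steps in ((0.2, 80), (1.0, 400)):
    samples = [simulate_forward(x0, t_end, n_steps, rng) for _ in range(n)]
    B = integrated_beta(t_end)
    theory = (x0 * math.exp(-0.5 * B), 1.0 - math.exp(-B))
    results[t_end] = (mean_var(samples), theory)
    print(t_end, results[t_end])
```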

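The remarkable result: there exists a reverse-time SDE corresponding to this forward SDE, and if you know the score function nabla_x log p_t(x) of the noisy distribution at every time t, you can run time backward:

dx = [f(x,t) - g(t)^2 * nabla_x log p_t(x)]dt + g(t)dw_bar (Reverse SDE)

Here time flows from T back to 0, so dt is a negative increment, and w_bar is a Wiener process running backward in time.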
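To see the reverse SDE actually undo the noise, here is a sketch under deliberately simple assumptions: the variance-preserving (VP) SDE that Song et al. associate with DDPM, f(x, t) = -1/2 * beta(t) * x and g(t) = sqrt(beta(t)), with a linear beta(t) from 0.1 to 20 (our assumption), and hypothetical 1-D data drawn from N(2, 0.5^2). For Gaussian data the noisy distribution p_t stays Gaussian, so its score is known exactly and no network is needed. Starting from N(0, 1) at t = 1 and integrating backward with Euler-Maruyama, the samples should end up with mean near 2 and variance near 0.25. In a real model, a learned score network would take the place of `true_score`.

```python
import math
import random

BETA_MIN, BETA_MAX = 0.1, 20.0       # assumed linear beta(t) on t in [0, 1]
DATA_MEAN, DATA_STD = 2.0, 0.5       # hypothetical 1-D Gaussian data distribution

def beta(t):
    return BETA_MIN + t * (BETA_MAX - BETA_MIN)

def integrated_beta(t):
    return BETA_MIN * t + 0.5 * (BETA_MAX - BETA_MIN) * t ** 2

def true_score(x, t):
    """Exact nabla_x log p_t(x): under the VP SDE, p_t = N(m * decay, s^2 * decay^2 + 1 - decay^2)."""
    decay = math.exp(-0.5 * integrated_beta(t))
    mean = DATA_MEAN * decay
    var = DATA_STD ** 2 * decay ** 2 + 1.0 - decay ** 2
    return -(x - mean) / var

def reverse_sample(n_steps, rng):
    """Euler-Maruyama on dx = [f - g^2 * score] dt + g dw_bar, with t running from 1 down to 0."""
    dt = 1.0 / n_steps                 # time decreases by dt each iteration
    x = rng.gauss(0.0, 1.0)            # start from the prior N(0, 1)
    for i in range(n_steps):
        t = 1.0 - i * dt
        b = beta(t)                    # g(t)^2 = beta(t)
        drift = -0.5 * b * x - b * true_score(x, t)   # f(x, t) - g(t)^2 * score
        x = x - drift * dt + math.sqrt(b * dt) * rng.gauss(0.0, 1.0)
    return x

rng = random.Random(0)
samples = [reverse_sample(400, rng) for _ in range(2000)]
mean = sum(samples) / len(samples)
var = sum((s - mean) ** 2 for s in samples) / len(samples)
print(f"sample mean {mean:.3f}, sample variance {var:.3f}")
```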

DDPM's epsilon-prediction and score matching are essentially the same thing expressed in different languages:

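s_theta(x_t, t) = -epsilon_theta(x_t, t) / sqrt(1 - alpha_bar_t), which approximates nabla_{x_t} log p_t(x_t)

The relation is exact for the conditional density, nabla_{x_t} log q(x_t | x_0) = -epsilon / sqrt(1 - alpha_bar_t), where epsilon is the noise that produced x_t. The epsilon_theta that minimizes L_simple is the average of epsilon given x_t, and averaging the conditional score in the same way yields the score of the noisy marginal p_t.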

Noise prediction = score estimation. Two sides of the same coin.
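For Gaussian data the coin can be flipped explicitly. The noise prediction that minimizes the squared error in L_simple is the conditional mean E[epsilon | x_t], and when x_0 ~ N(m, s^2) (hypothetical values below) that mean has a closed form because epsilon and x_t are jointly Gaussian. The sketch rescales it by -1/sqrt(1 - alpha_bar_t) and compares the result with a finite-difference gradient of the log density of x_t; helper names are ours.

```python
import math

DATA_MEAN, DATA_STD = 2.0, 0.5   # hypothetical Gaussian data: x_0 ~ N(2, 0.5^2)

def marginal_params(abar_t):
    """x_t = sqrt(abar_t) x_0 + sqrt(1 - abar_t) eps is Gaussian with this mean and variance."""
    a = math.sqrt(abar_t)
    return a * DATA_MEAN, a ** 2 * DATA_STD ** 2 + (1.0 - abar_t)

def optimal_eps(x_t, abar_t):
    """E[eps | x_t] = Cov(eps, x_t) / Var(x_t) * (x_t - E[x_t]), with Cov(eps, x_t) = sqrt(1 - abar_t)."""
    mean, var = marginal_params(abar_t)
    return math.sqrt(1.0 - abar_t) * (x_t - mean) / var

def log_marginal(x_t, abar_t):
    mean, var = marginal_params(abar_t)
    return -0.5 * (x_t - mean) ** 2 / var - 0.5 * math.log(2.0 * math.pi * var)

def score_fd(x_t, abar_t, h=1e-5):
    """Central finite difference of log p_t at x_t."""
    return (log_marginal(x_t + h, abar_t) - log_marginal(x_t - h, abar_t)) / (2.0 * h)

gaps = []
for abar_t in (0.9, 0.5, 0.05):
    for x_t in (-1.0, 0.3, 2.2):
        score_from_eps = -optimal_eps(x_t, abar_t) / math.sqrt(1.0 - abar_t)
        gaps.append(abs(score_from_eps - score_fd(x_t, abar_t)))
print(f"largest gap: {max(gaps):.2e}")
```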

Why This Cannot Be Directly Applied to Text

The Diffusion models described so far operate in continuous space. Image pixel values (stored as integers from 0 to 255 and typically rescaled to [-1, 1] before training) can be treated as real numbers, so Gaussian noise can be added and removed naturally.

But text consists of discrete tokens. What happens if you add Gaussian noise to the word "cat"? "cat" + 0.3 is meaningless.

There are two approaches to solving this problem:

- Map tokens into continuous space (embeddings) and then apply Diffusion (Continuous-space approach)

- Define Diffusion directly in discrete space (Discrete Diffusion)

LLaDA adopted the second approach. Instead of Gaussian noise, it uses token masking. This is the topic of Part 2.

Key Takeaways

| Concept | Description |
|---|---|
| Forward Process | Gradually adds noise to data. q(x_t given x_{t-1}) |
| Reverse Process | Recovers data from noise. p_theta(x_{t-1} given x_t) |
| Reparameterization | Any time step x_t can be generated directly from x_0 in one shot |
| Simplified Loss | Train the model to predict the added noise epsilon |
| Score Function | Gradient of the log data distribution. Equivalent to noise prediction |
| SDE Framework | Mathematical framework unifying DDPM and Score matching |
| Limitation | Continuous-space only -- cannot be directly applied to discrete tokens (text) |

What Comes Next

In Part 2, we look at how to bring this continuous-space Diffusion into the world of discrete tokens. We will explore D3PM's Transition Matrix, Absorbing States ([MASK] tokens), and how MDLM made all of this practical for text generation.

References

- Ho, Jain, Abbeel. "Denoising Diffusion Probabilistic Models." NeurIPS 2020.

- Song et al. "Score-Based Generative Modeling through Stochastic Differential Equations." ICLR 2021.

- Sohl-Dickstein et al. "Deep Unsupervised Learning using Nonequilibrium Thermodynamics." ICML 2015.

- Luo. "Understanding Diffusion Models: A Unified Perspective." arXiv:2208.11970, 2022.
